How to speed up site migrations with AI-powered redirect mapping

Migrating a large website is always daunting. Big traffic is at stake among many moving parts, technical challenges and stakeholder management.

Historically, one of the most onerous tasks in a migration plan has been redirect mapping: the painstaking process of matching URLs on your current site to the equivalent version on the new website.

Fortunately, this task, which previously could involve teams of people combing through thousands of URLs, can be drastically sped up with modern AI models.

Should you use AI for redirect mapping?

The term “AI” has become somewhat conflated with “ChatGPT” over the last year, so to be very clear from the outset, we are not talking about using generative AI/LLM-based systems to do your redirect mapping.

While there are some tasks that tools like ChatGPT can assist you with, such as writing that tricky regex for the redirect logic, the generative element that can cause hallucinations could potentially create accuracy issues for us.

Advantages of using AI for redirect mapping

Speed

The primary advantage of using AI for redirect mapping is the sheer speed at which it can be done. An initial map of 10,000 URLs can be produced within a few minutes and human-reviewed within a few hours. Doing this process manually would usually take a single person days of work.

Scalability

Using AI to help map redirects is a method you can use on a site with 100 URLs or over 1,000,000. Large sites also tend to be more programmatic or templated, making similarity matching more accurate with these tools.

Efficiency

For larger sites, a multi-person job can easily be handled by a single person with the correct knowledge, freeing up colleagues to assist with other parts of the migration.

Accuracy

While the automated method will get some redirects “wrong,” in my experience, the overall accuracy of redirects has been higher, as the output can specify the similarity of the match, giving manual reviewers a guide on where their attention is most needed.

Disadvantages of using AI for redirect mapping

Over-reliance

Using automation tools can make people complacent and over-reliant on the output. With such an important task, a human review is always required.

Training

The script is pre-written and the process is straightforward. However, it will be new to many people, and environments such as Google Colab can be intimidating.

Output variance

While the output is deterministic, the models will perform better on certain sites than others. Sometimes, the output can contain “silly” errors, which are obvious for a human to spot but harder for a machine.

A step-by-step guide for URL mapping with AI

By the end of this process, we are aiming to produce a spreadsheet that lists “from” and “to” URLs by mapping the origin URLs on our live website to the destination URLs on our staging (new) website.

For this example, to keep things simple, we will just be mapping our HTML pages, not additional assets such as CSS or images, although this is also possible.

Tools we’ll be using

- Screaming Frog Website Crawler: A powerful and versatile website crawler, Screaming Frog is how we collect the URLs and associated metadata we need for the matching.

- Google Colab: A free cloud service that uses a Jupyter notebook environment, allowing you to run a range of languages directly from your browser without having to install anything locally. Google Colab is how we are going to run our Python scripts to perform the URL matching.

- Automated Redirect Matchmaker for Site Migrations: The Python script by Daniel Emery that we’ll be running in Colab.

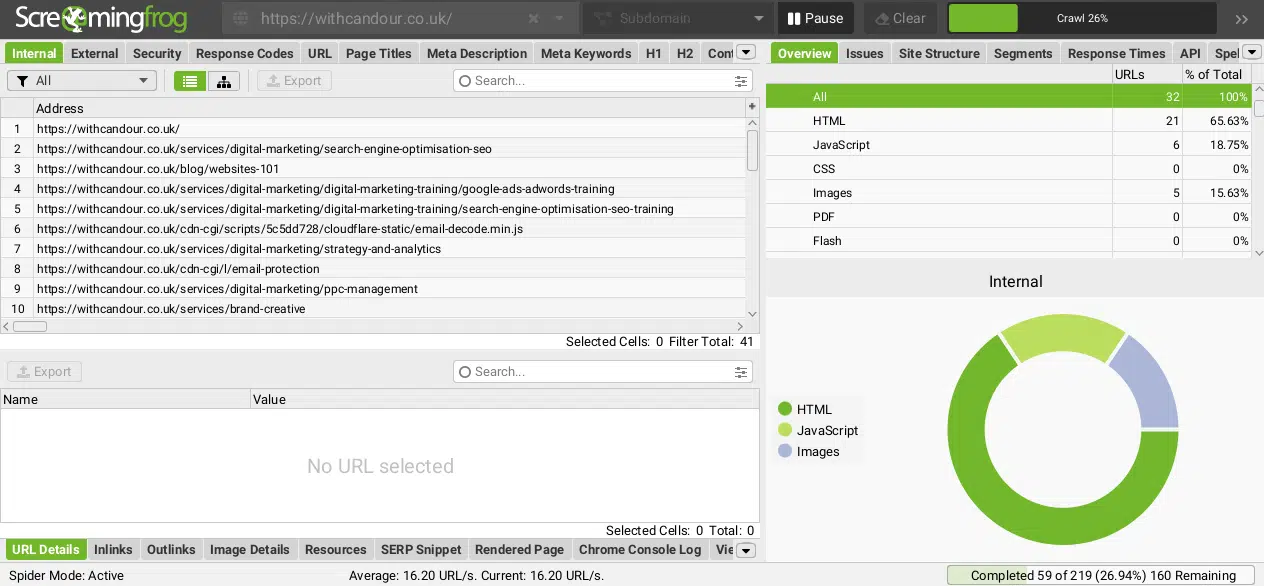

Step 1: Crawl your live website with Screaming Frog

You’ll need to perform a standard crawl on your website. Depending on how your website is built, this may or may not require a JavaScript crawl. The goal is to produce a list of as many accessible pages on your site as possible.

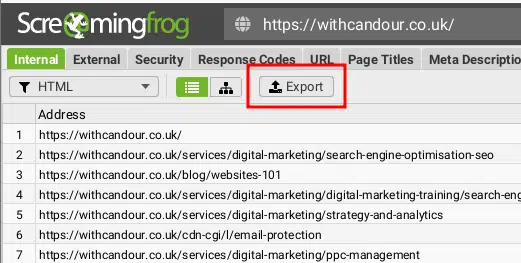

Step 2: Export HTML pages with 200 Status Code

Once the crawl has been completed, we want to export all of the found HTML URLs with a 200 Status Code.

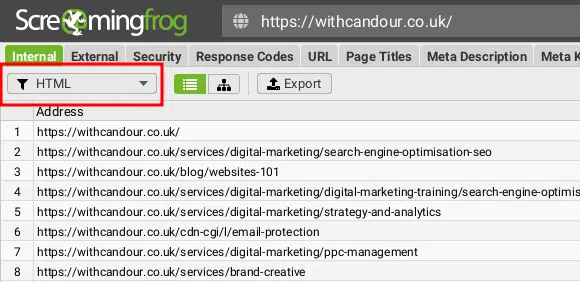

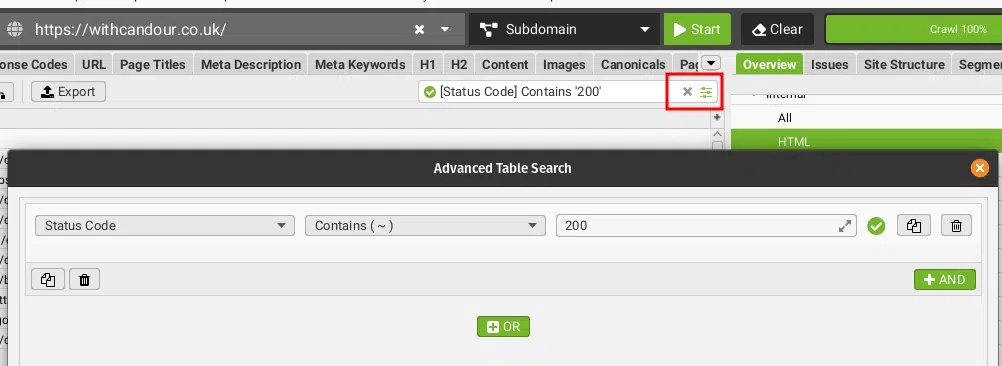

Firstly, in the top left-hand corner, we need to select “HTML” from the drop-down menu.

Next, click the sliders filter icon in the top right and create a filter for Status Codes containing 200.

Finally, click on Export to save this data as a CSV.

This will give you a list of your current live URLs and all of the default metadata Screaming Frog collects about them, such as Titles and Header Tags. Save this file as origin.csv.
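If you want to sanity-check the export before moving on, a short Python sketch like the one below can confirm that only HTML pages with a 200 status made it into the file. The column names `Address`, `Content Type`, `Status Code` and `Title 1` are assumptions about how the export is labelled, and the sample rows are made up; adjust both to match your own origin.csv.

```python
import csv
import io


def filter_html_200(rows):
    """Keep rows that are HTML pages answering with a 200 status code."""
    kept = []
    for row in rows:
        # Column names here are assumptions about the crawler export.
        is_html = "text/html" in row.get("Content Type", "").lower()
        is_ok = row.get("Status Code", "").strip() == "200"
        if is_html and is_ok:
            kept.append(row)
    return kept


# Tiny inline sample standing in for a real export (made-up rows).
sample_export = io.StringIO(
    "Address,Content Type,Status Code,Title 1\n"
    "https://example.com/,text/html; charset=UTF-8,200,Home\n"
    "https://example.com/old,text/html; charset=UTF-8,301,\n"
    "https://example.com/logo.png,image/png,200,\n"
)

rows = filter_html_200(csv.DictReader(sample_export))
print([row["Address"] for row in rows])
```

To run it against a real file, you could pass `csv.DictReader(open("origin.csv", newline="", encoding="utf-8"))` in place of the inline sample.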

Important note: Your full migration plan needs to account for things such as existing 301 redirects and URLs that may get traffic on your site but are not accessible from an initial crawl. This guide is intended only to demonstrate part of this URL mapping process; it is not an exhaustive guide.

Step 3: Repeat steps 1 and 2 for your staging website

We now need to gather the same data from our staging website, so we have something to compare to.

Depending on how your staging site is secured, you may need to use features such as Screaming Frog’s forms authentication if it is password protected.

Once the crawl has completed, you should export the data and save this file as destination.csv.

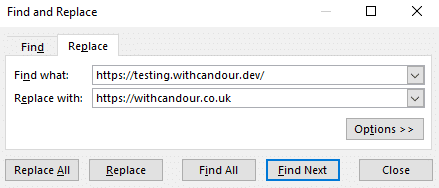

Optional: Find and replace your staging site domain or subdomain to match your live site

It’s likely your staging website is on a different subdomain, TLD or even domain that won’t match our actual destination URL. For this reason, I will use a Find and Replace function on my destination.csv to change the path to match the final live site subdomain, domain or TLD.

For instance:

- My live website is https://withcandour.co.uk/ (origin.csv)

- My staging website is https://testing.withcandour.dev/ (destination.csv)

- The site is staying on the same domain; it’s just a redesign with different URLs, so I would open destination.csv and find any instance of https://testing.withcandour.dev and replace it with https://withcandour.co.uk.

This also means when the redirect map is produced, the output is correct and only the final redirect logic needs to be written.
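The same find and replace can be done in a few lines of Python if you would rather not open the file in a spreadsheet. This is only a sketch: the `Address` and `Title 1` column names and the `/about/` sample row are assumptions for illustration, while the two domain prefixes are the ones from the example above.

```python
import csv
import io

STAGING_PREFIX = "https://testing.withcandour.dev"
LIVE_PREFIX = "https://withcandour.co.uk"


def swap_domain(rows, column="Address"):
    """Return copies of the rows with the staging prefix swapped for the live one."""
    swapped = []
    for row in rows:
        row = dict(row)  # copy so the input rows are left untouched
        url = row[column]
        if url.startswith(STAGING_PREFIX):
            row[column] = LIVE_PREFIX + url[len(STAGING_PREFIX):]
        swapped.append(row)
    return swapped


# Inline sample standing in for destination.csv (made-up rows).
sample_destination = io.StringIO(
    "Address,Title 1\n"
    "https://testing.withcandour.dev/,Home\n"
    "https://testing.withcandour.dev/about/,About\n"
)
rewritten = swap_domain(csv.DictReader(sample_destination))

# Write the result back out as CSV text.
output = io.StringIO()
writer = csv.DictWriter(output, fieldnames=["Address", "Title 1"])
writer.writeheader()
writer.writerows(rewritten)
print(output.getvalue())
```

Only the start of each URL is rewritten, so a staging hostname that happens to appear later in a path or query string is left alone.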

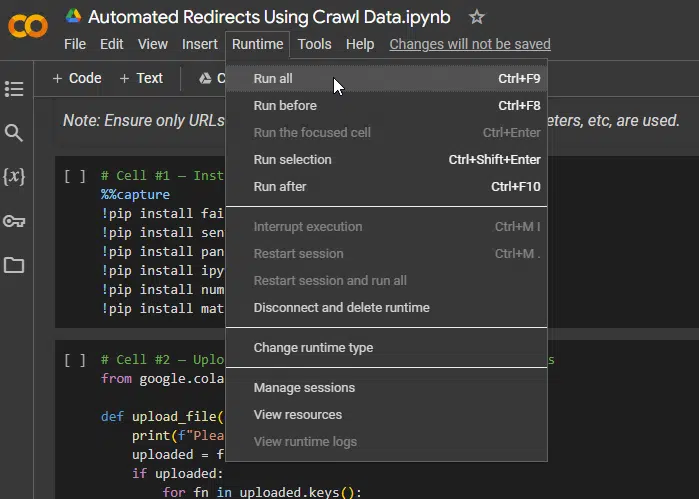

Step 4: Run the Google Colab Python script

When you navigate to the script in your browser, you will see it is broken up into several code blocks, and hovering over each one will give you a “play” icon. This is if you wish to execute one block of code at a time.

However, the script will work perfectly well executing all of the code blocks, which you can do by going to the Runtime menu and selecting Run all.

There are no prerequisites to run the script; it will create a cloud environment, and on the first execution in your instance, it will take around one minute to install the required modules.

Each code block will have a small green tick next to it once it is complete, but the third code block will require your input to continue, and it’s easy to miss as you will likely need to scroll down to see the prompt.

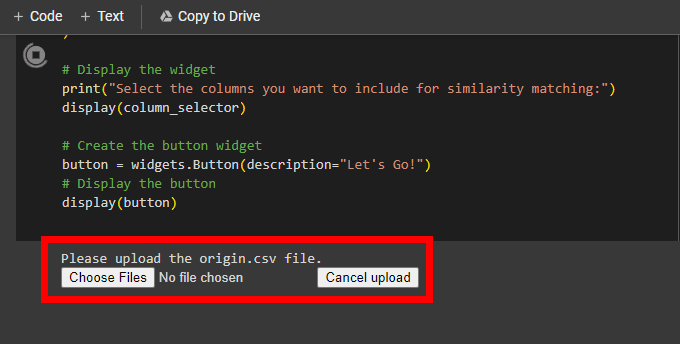

Step 5: Upload origin.csv and destination.csv

When prompted, click Choose files and navigate to where you saved your origin.csv file. Once you have selected this file, it will upload and you will be prompted to do the same for your destination.csv.

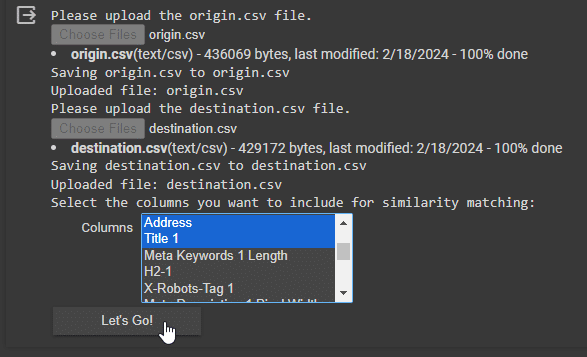

Step 6: Select fields to use for similarity matching

What makes this script particularly powerful is the ability to use multiple sets of metadata for your comparison.

This means if you’re in a situation where you’re moving architecture and your URL Address is not comparable, you can run the similarity algorithm on other factors under your control, such as Page Titles or Headings.

Have a look at both sites and try to judge which elements remain fairly consistent between them. Generally, I would advise starting simple and adding more fields if you are not getting the results you want.

In my example, we have kept a similar URL naming convention, although not identical, and our page titles remain consistent as we are copying the content over.

Select the elements you want to use and click Let’s Go!

Step 7: Watch the magic

The script’s main components are all-MiniLM-L6-v2 and FAISS, but what are they and what are they doing?

all-MiniLM-L6-v2 is a small and efficient model within the Microsoft series of MiniLM models, which are designed for natural language processing (NLP) tasks. MiniLM is going to convert the text data we’ve given it into numerical vectors that capture their meaning.

These vectors then enable the similarity search, performed by Facebook AI Similarity Search (FAISS), a library developed by Facebook AI Research for efficient similarity search and clustering of dense vectors. This will quickly find our most similar content pairs across the dataset.
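To make the idea of “turn text into vectors, then find the nearest vector” concrete, here is a deliberately simplified, standard-library sketch. It is not the script’s code: character trigram counts stand in for the MiniLM embeddings, and a brute-force cosine comparison stands in for FAISS. All URLs and page titles are made up for illustration.

```python
import math
from collections import Counter


def embed(text, n=3):
    """Toy embedding: counts of character n-grams (a stand-in for MiniLM)."""
    text = text.lower()
    return Counter(text[i:i + n] for i in range(len(text) - n + 1))


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(count * b[gram] for gram, count in a.items())
    norm_a = math.sqrt(sum(c * c for c in a.values()))
    norm_b = math.sqrt(sum(c * c for c in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def best_matches(origin, destination):
    """For each origin URL, find the destination URL with the closest text."""
    dest_vectors = [(url, embed(text)) for url, text in destination.items()]
    results = []
    for url, text in origin.items():
        vec = embed(text)
        # Brute-force search over every destination (a stand-in for FAISS).
        match, score = max(
            ((d_url, cosine(vec, d_vec)) for d_url, d_vec in dest_vectors),
            key=lambda pair: pair[1],
        )
        results.append((url, match, score))
    return results


# Hypothetical page titles keyed by URL path.
origin = {
    "/services/seo-audit": "SEO Audit Services",
    "/team/jane": "Jane Doe, Head of Design",
}
destination = {
    "/what-we-do/seo-audits": "SEO Audit Services",
    "/about/people/jane-doe": "Jane Doe | Head of Design",
    "/contact": "Contact us",
}

results = best_matches(origin, destination)
for origin_url, matched_url, score in results:
    print(origin_url, "->", matched_url, round(score, 2))
```

A real embedding model captures meaning rather than shared letters, so it can match pages whose wording differs, and a library like FAISS avoids comparing every pair when the lists run into the hundreds of thousands.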

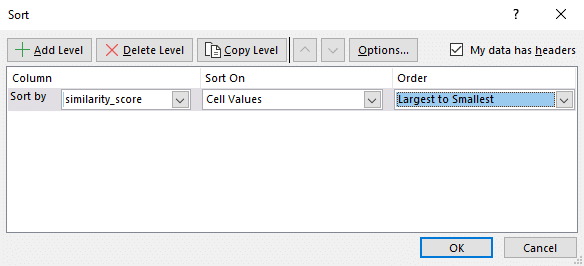

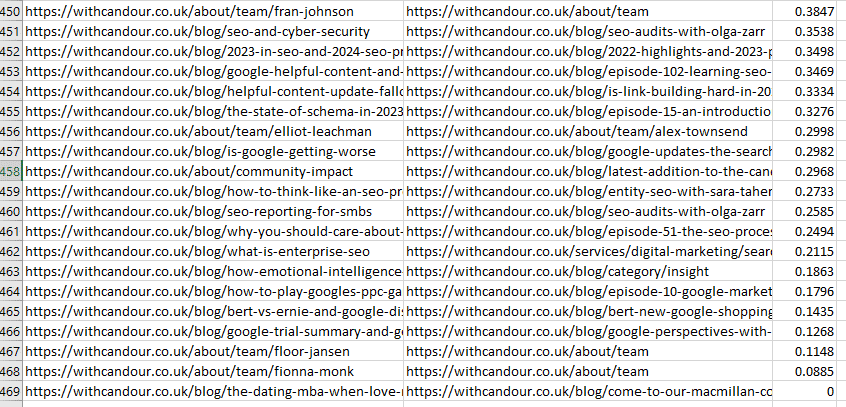

Step 7: Download output.csv and sort by similarity_score

The output.csv should automatically download from your browser. If you open it, you should have three columns: origin_url, matched_url and similarity_score.

In your favorite spreadsheet software, I would recommend sorting by similarity_score.

The similarity score gives you an idea of how good the match is. A similarity score of 1 suggests an exact match.

By checking my output file, I immediately saw that approximately 95% of my URLs have a similarity score of more than 0.98, so there is a good chance I’ve saved myself a lot of time.

Step 8: Human-validate your results

Pay special attention to the lowest similarity scores on your sheet; this is likely where no good matches can be found.

In my example, there were some poor matches on the team page, which led me to discover that not all of the team profiles had yet been created on the staging site, a really helpful find.

The script has also quite helpfully given us redirect recommendations for old blog content we decided to axe and not include on the new website, so we now have a suggested redirect should we want to pass the traffic to something related. That is ultimately your call.
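If the sheet is large, the review in this step can start from a short list of the weakest matches. The sketch below reads the three output columns named earlier and returns the rows under a cut-off, lowest score first. The 0.9 threshold and the sample rows are assumptions for illustration, not values recommended by the script.

```python
import csv
import io

REVIEW_THRESHOLD = 0.9  # hypothetical cut-off; tune it to your own data


def rows_needing_review(rows, threshold=REVIEW_THRESHOLD):
    """Return rows scoring below the threshold, weakest match first."""
    scored = [(float(row["similarity_score"]), row) for row in rows]
    scored.sort(key=lambda pair: pair[0])
    return [row for score, row in scored if score < threshold]


# Inline sample standing in for output.csv (made-up rows).
sample_output = io.StringIO(
    "origin_url,matched_url,similarity_score\n"
    "https://example.com/a,https://example.com/new-a,0.99\n"
    "https://example.com/team/sam,https://example.com/about,0.41\n"
    "https://example.com/blog/old-post,https://example.com/blog,0.73\n"
)

to_review = rows_needing_review(csv.DictReader(sample_output))
for row in to_review:
    print(row["similarity_score"], row["origin_url"], "->", row["matched_url"])
```

A high score is still no guarantee of a correct redirect, so this list is a place to start the human check rather than a replacement for it.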

Step 9: Tweak and repeat

If you didn’t get the desired results, I would double-check that the fields you use for matching are staying as consistent as possible between sites. If not, try a different field or group of fields and rerun.

More AI to come

In general, I have been slow to adopt any AI (especially generative AI) into the redirect mapping process, as the cost of mistakes can be high, and AI errors can sometimes be tricky to spot.

However, from my testing, I’ve found these specific AI models to be robust for this particular task, and it has fundamentally changed how I approach site migrations.

Human checking and oversight are still required, but the amount of time saved on the bulk of the work means you can do a more thorough and thoughtful human intervention and finish the task many hours ahead of where you would usually be.

In the not-too-distant future, I expect we’ll see more specific models that will allow us to take additional steps, including improving the speed and efficiency of the next step, the redirect logic.

Opinions expressed in this article are those of the guest author and not necessarily Search Engine Land.
