9 Common Technical SEO Issues That Actually Matter


In this article, we’ll see how to find and fix technical SEO issues, but only those that can seriously affect your rankings.

If you’d like to follow along, get Ahrefs Webmaster Tools and Google Search Console (both are free) and check for the following issues.

Indexability is a webpage’s ability to be indexed by search engines. Pages that aren’t indexable can’t be displayed on the search engine results pages and can’t bring in any search traffic.

Three requirements must be met for a page to be indexable:

- The page must be crawlable. If you haven’t blocked Googlebot from entering the page in robots.txt or you have a website with fewer than 1,000 pages, you probably don’t have an issue there.

- The page must not have a noindex tag (more on that in a bit).

- The page must be canonical (i.e., the main version).
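To make the last two requirements concrete, here is a minimal Python sketch that reads a page's HTML and reports whether it carries a noindex directive or a canonical tag pointing somewhere else. The class and function names are made up for illustration, and the sketch deliberately ignores crawlability and the `X-Robots-Tag` HTTP header, so treat it as a rough check rather than a replacement for a crawler.

```python
from html.parser import HTMLParser


class IndexabilityParser(HTMLParser):
    """Collects the robots meta directive and the canonical URL of a page."""

    def __init__(self):
        super().__init__()
        self.noindex = False
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "meta" and (attrs.get("name") or "").lower() in ("robots", "googlebot"):
            # e.g. <meta name="robots" content="noindex, follow">
            if "noindex" in (attrs.get("content") or "").lower():
                self.noindex = True
        elif tag == "link" and "canonical" in (attrs.get("rel") or "").lower().split():
            # e.g. <link rel="canonical" href="https://example.com/page">
            self.canonical = attrs.get("href")


def indexability_problems(url, html):
    """Return the reasons (if any) why this page may not be indexable."""
    parser = IndexabilityParser()
    parser.feed(html)
    problems = []
    if parser.noindex:
        problems.append("noindex")
    if parser.canonical and parser.canonical != url:
        problems.append("canonical points elsewhere")
    return problems
```

An empty list means neither of the two problems was found in the HTML itself.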

Solution

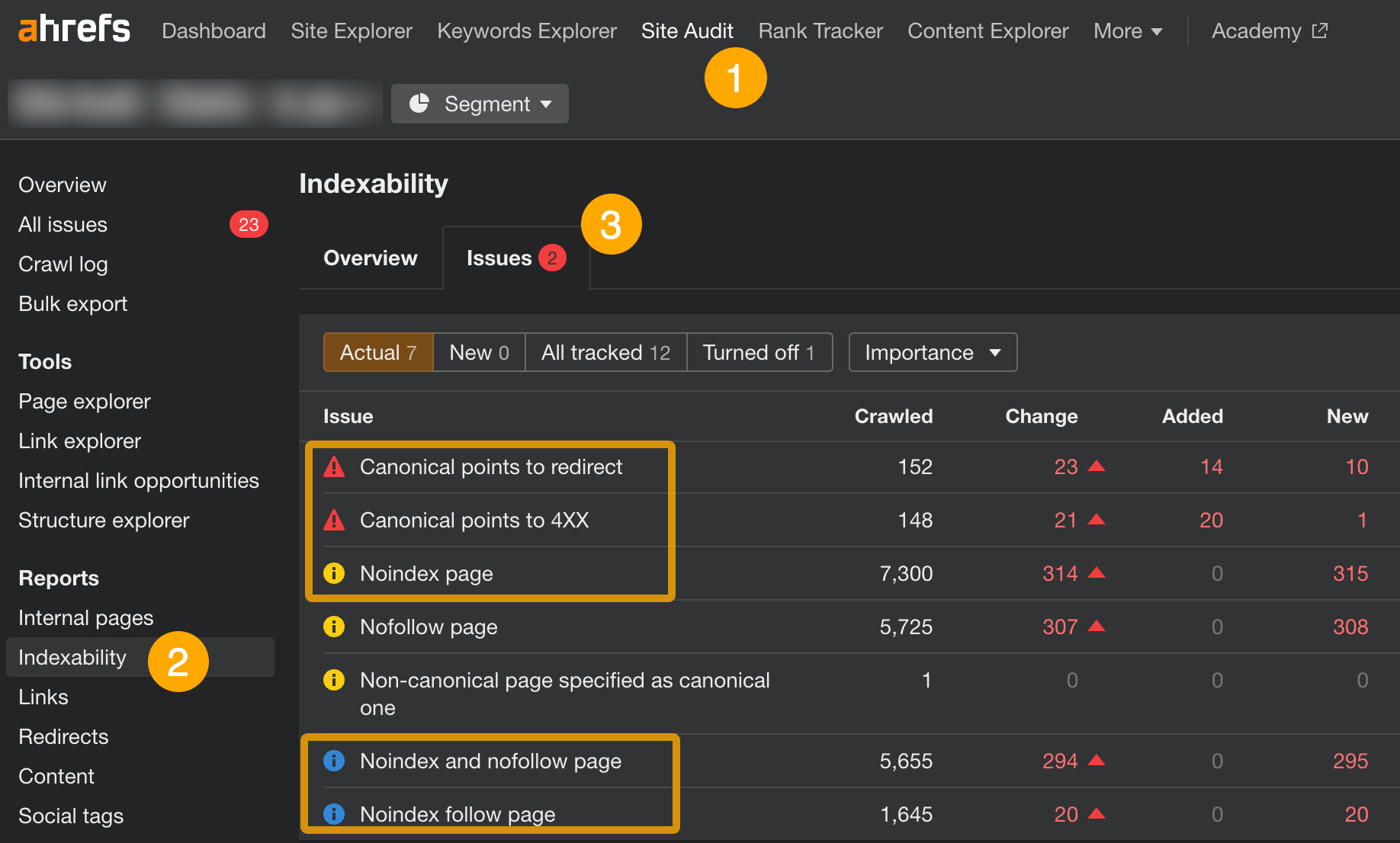

In Ahrefs Webmaster Tools (AWT):

- Open Site Audit

- Go to the Indexability report

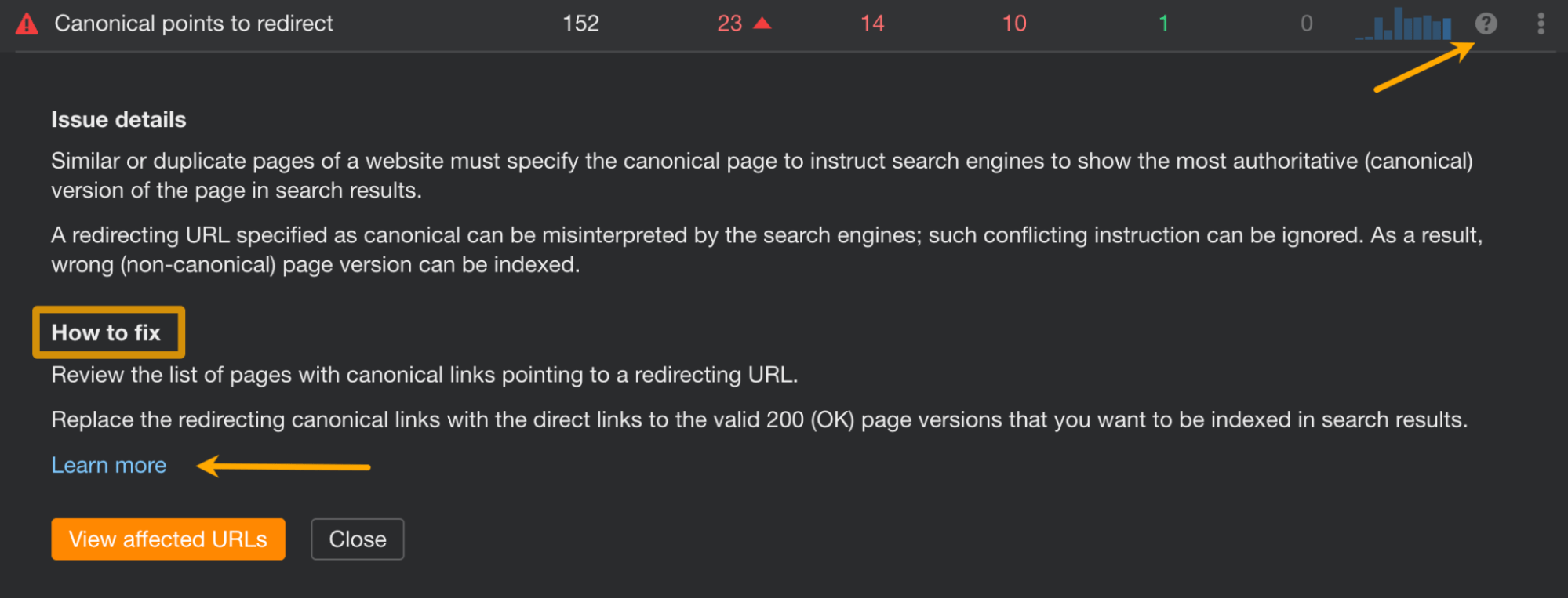

- Click on issues related to canonicalization and “noindex” to see affected pages

For canonicalization issues in this report, you will need to replace bad URLs in the link rel="canonical" tag with valid ones (i.e., returning an “HTTP 200 OK”).

As for pages marked by “noindex” issues, these are the pages with the “noindex” meta tag placed inside their code. Chances are most of the pages found in the report should stay as is. But if you see any pages that shouldn’t be there, simply remove the tag. Do make sure those pages aren’t blocked by robots.txt first.
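If you want to double-check the robots.txt part yourself, Python's standard library ships a parser for it. The sketch below is only an approximation: `blocked_for_googlebot` is a hypothetical helper name, and the standard-library parser does plain prefix matching, so it may not agree with Googlebot on rules that use wildcards.

```python
from urllib.robotparser import RobotFileParser


def blocked_for_googlebot(robots_txt, urls):
    """Return the URLs that the given robots.txt text disallows for Googlebot."""
    parser = RobotFileParser()
    # parse() takes the file's lines, so no network request is made here.
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch("Googlebot", url)]
```

Pass in the text of your robots.txt and the pages you are about to make indexable; anything returned is still blocked from crawling.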

Recommendation

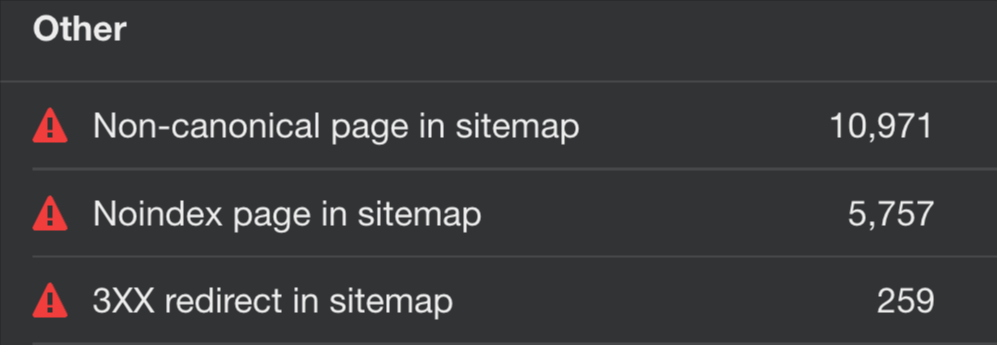

A sitemap should contain only pages that you want search engines to index.

When a sitemap isn’t regularly updated or an unreliable generator has been used to make it, a sitemap may start to show broken pages, pages that became “noindexed,” pages that were de-canonicalized, or pages blocked in robots.txt.

Solution

In AWT:

- Open Site Audit

- Go to the All issues report

- Click on issues containing the word “sitemap” to find affected pages

Depending on the issue, you will have to:

- Delete the pages from the sitemap.

- Remove the noindex tag on the pages (if you want to keep them in the sitemap).

- Provide a valid URL for the reported page.
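The same check can be sketched in a few lines of Python if you already have crawl data for your pages. Everything about the input shape is an assumption made for this example: `page_info` is a hypothetical dictionary you would build yourself (from a crawl export, for instance), mapping each URL to its status code and noindex flag.

```python
import xml.etree.ElementTree as ET

# Namespace used by standard XML sitemaps.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_urls(sitemap_xml):
    """Extract every <loc> value from a sitemap XML string."""
    root = ET.fromstring(sitemap_xml)
    return [loc.text.strip() for loc in root.findall("sm:url/sm:loc", SITEMAP_NS) if loc.text]


def sitemap_problems(sitemap_xml, page_info):
    """Map each problematic sitemap URL to a short description of the problem.

    page_info (hypothetical shape): url -> {"status": int, "noindex": bool}
    """
    problems = {}
    for url in sitemap_urls(sitemap_xml):
        info = page_info.get(url)
        if info is None:
            problems[url] = "not crawled"
        elif info["status"] != 200:
            problems[url] = f"returns {info['status']}"
        elif info["noindex"]:
            problems[url] = "noindex"
    return problems
```

URLs that come back with a problem are the ones to delete from the sitemap or fix on the page.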

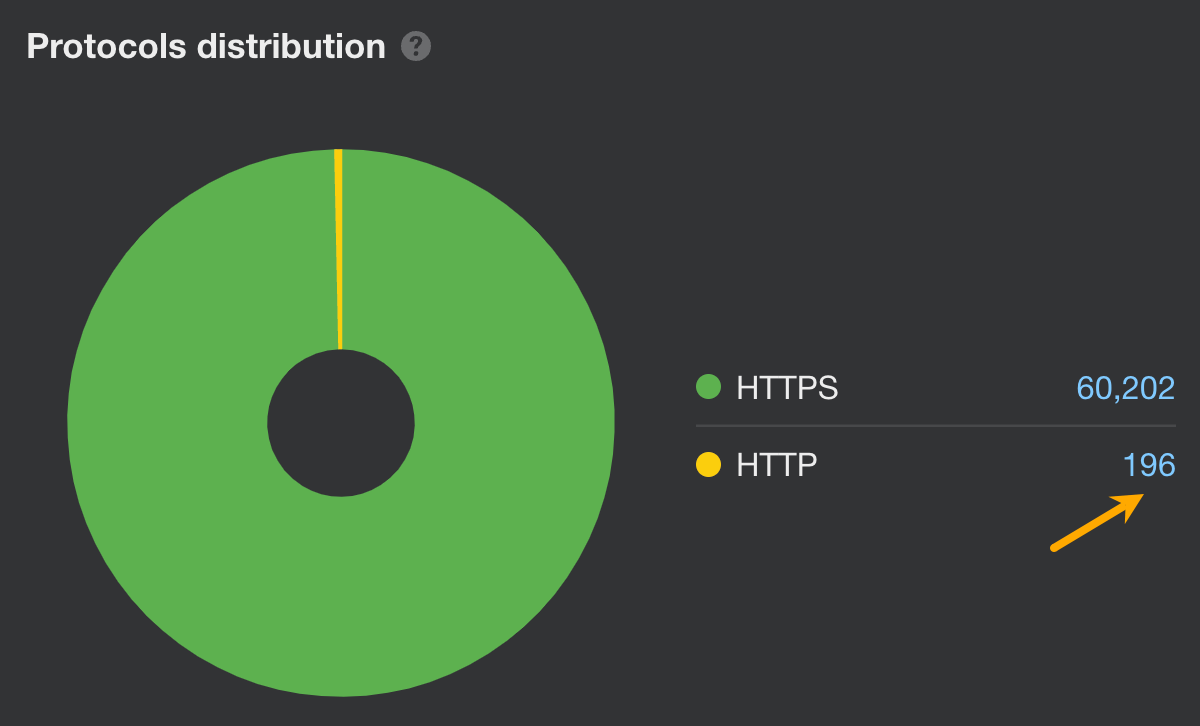

Google uses HTTPS encryption as a small ranking signal. This means you can experience lower rankings if you don’t have an SSL or TLS certificate securing your website.

But even if you do, some pages and/or resources on your pages may still use the HTTP protocol.

Solution

Assuming you already have an SSL/TLS certificate for all subdomains (if not, do get one), open AWT and do these:

- Open Site Audit

- Go to the Internal pages report

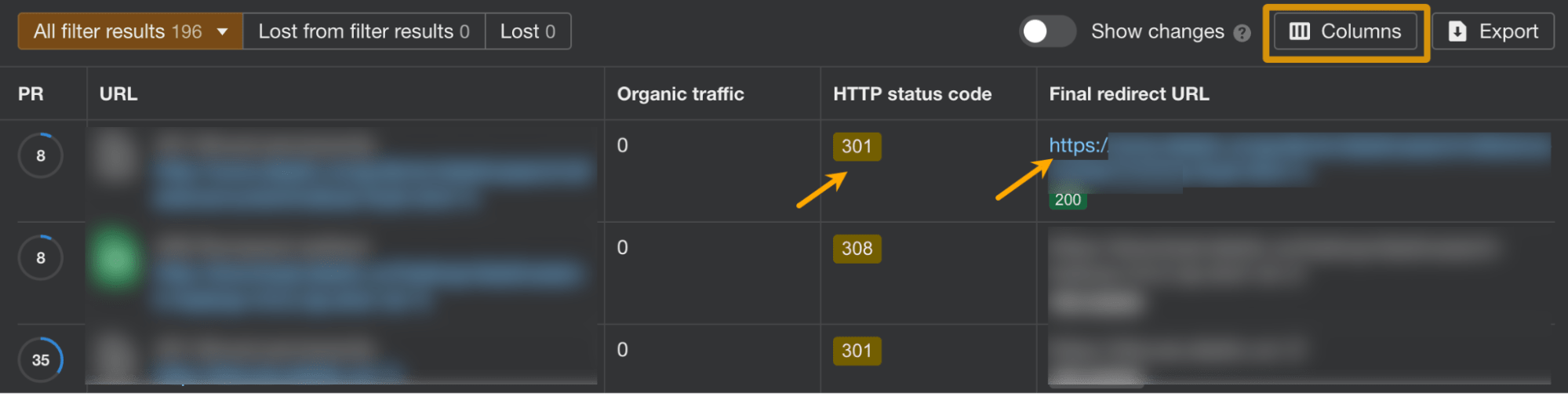

- Look at the protocol distribution graph and click on HTTP to see affected pages

- Inside the report showing pages, add a column for Final redirect URL

- Make sure all HTTP pages are permanently redirected (301 or 308 redirects) to their HTTPS counterparts
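As a rough illustration of what “permanently redirected to HTTPS” means in practice, the sketch below checks a recorded redirect chain. It assumes you have already collected the chain as `(status_code, location)` pairs, for example from a crawler export; the function name and input format are invented for this example.

```python
from urllib.parse import urlsplit

PERMANENT = {301, 308}


def https_redirect_ok(hops):
    """Check the redirect chain recorded for an HTTP URL.

    hops: list of (status_code, location) pairs, in the order they were followed.
    """
    if not hops:
        return False  # the HTTP page answered directly, with no redirect
    if any(status not in PERMANENT for status, _ in hops):
        return False  # a temporary redirect (e.g. 302 or 307) is in the chain
    final_location = hops[-1][1]
    return urlsplit(final_location).scheme == "https"
```

For example, `https_redirect_ok([(301, "https://example.com/a")])` passes, while a chain containing a 302 does not.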

Finally, let’s check if any resources on the site still use HTTP:

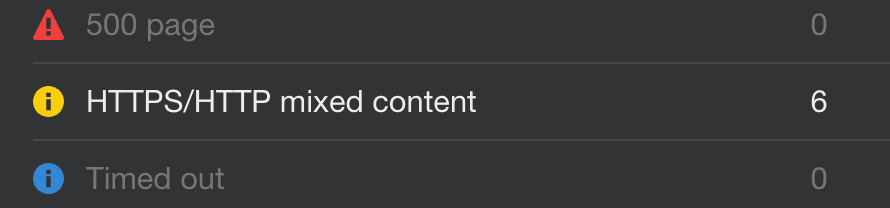

- Inside the Internal pages report, click on Issues

- Click on HTTPS/HTTP mixed content to view affected resources

You can fix this issue by one of these methods:

- Link to the HTTPS version of the resource (check this option first)

- Include the resource from a different host, if available

- Download and host the content on your site directly if you are legally allowed to do so

- Exclude the resource from your site altogether
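To see what counts as mixed content on a single page, here is a small Python sketch that lists resources loaded over plain HTTP. The tag list is an assumption and is not exhaustive (it skips things like CSS `url()` references and `srcset`), and the names are hypothetical.

```python
from html.parser import HTMLParser

# Tags that load a resource, and the attribute holding its URL.
RESOURCE_ATTRS = {
    "img": "src", "script": "src", "iframe": "src",
    "audio": "src", "video": "src", "source": "src", "link": "href",
}


class MixedContentParser(HTMLParser):
    def __init__(self):
        super().__init__()
        self.insecure = []

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        attr = RESOURCE_ATTRS.get(tag)
        if attr is None:
            return  # ordinary links (<a href>) are not mixed content
        if tag == "link" and "stylesheet" not in (attrs.get("rel") or "").lower().split():
            return  # only stylesheets are loaded as resources here
        value = attrs.get(attr)
        if value and value.lower().startswith("http://"):
            self.insecure.append((tag, value))


def find_mixed_content(html):
    """Return (tag, url) pairs for resources requested over plain HTTP."""
    parser = MixedContentParser()
    parser.feed(html)
    return parser.insecure
```

Each pair returned is a candidate for one of the four fixes listed above.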

Learn more: What Is HTTPS? Everything You Need to Know

Duplicate content happens when exact or near-duplicate content appears on the web in more than one place.

It’s bad for SEO mainly for two reasons: It can cause undesirable URLs to show in search results and can dilute link equity.

Content duplication is not necessarily a case of intentional or unintentional creation of similar pages. There are other less obvious causes such as faceted navigation, tracking parameters in URLs, or using trailing and non-trailing slashes.
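Two of those causes, tracking parameters and trailing slashes, are easy to illustrate. The sketch below normalizes URLs and groups the ones that collapse to the same address. The list of tracking parameters and the decision to strip trailing slashes are assumptions made for the example; your site may legitimately treat those URLs differently.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumed tracking parameters; extend to match your own analytics setup.
TRACKING_PREFIXES = ("utm_",)
TRACKING_PARAMS = {"gclid", "fbclid"}


def normalize_url(url):
    """Drop tracking parameters, the trailing slash, and the fragment."""
    parts = urlsplit(url)
    query = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if not key.lower().startswith(TRACKING_PREFIXES) and key.lower() not in TRACKING_PARAMS
    ]
    path = parts.path.rstrip("/") or "/"
    return urlunsplit((parts.scheme, parts.netloc.lower(), path, urlencode(query), ""))


def group_duplicates(urls):
    """Group URLs that normalize to the same address (likely duplicates)."""
    groups = {}
    for url in urls:
        groups.setdefault(normalize_url(url), []).append(url)
    return {key: members for key, members in groups.items() if len(members) > 1}
```

Any group with more than one member is a set of URLs that probably serve the same content.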

Solution

First, check if your website is available under only one URL. Because if your website is accessible as:

- http://domain.com

- http://www.domain.com

- https://domain.com

- https://www.domain.com

Then Google will see all of those URLs as different websites.

The easiest way to check if users can browse only one version of your website: type in all four variations in the browser, one by one, hit enter, and see if they get redirected to the master version (ideally, the one with HTTPS).
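If you prefer to keep a record of that manual check, the sketch below generates the four variations and compares where each one ended up against the version you chose as the master. The `final_urls` mapping is something you fill in yourself from what you observed (in the browser or with an HTTP client); both function names are hypothetical.

```python
def site_variants(domain):
    """Return the four protocol/www combinations for a domain."""
    bare = domain[4:] if domain.startswith("www.") else domain
    return [
        f"{scheme}://{host}"
        for scheme in ("http", "https")
        for host in (bare, f"www.{bare}")
    ]


def non_redirecting_variants(domain, canonical, final_urls):
    """List variants that do not end up on the canonical version.

    final_urls: variant -> URL the browser finally landed on (as observed by you).
    A variant missing from final_urls is treated as not redirecting at all.
    """
    target = canonical.rstrip("/")
    return [
        variant
        for variant in site_variants(domain)
        if final_urls.get(variant, variant).rstrip("/") != target
    ]
```

An empty result means every variation lands on the master version.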

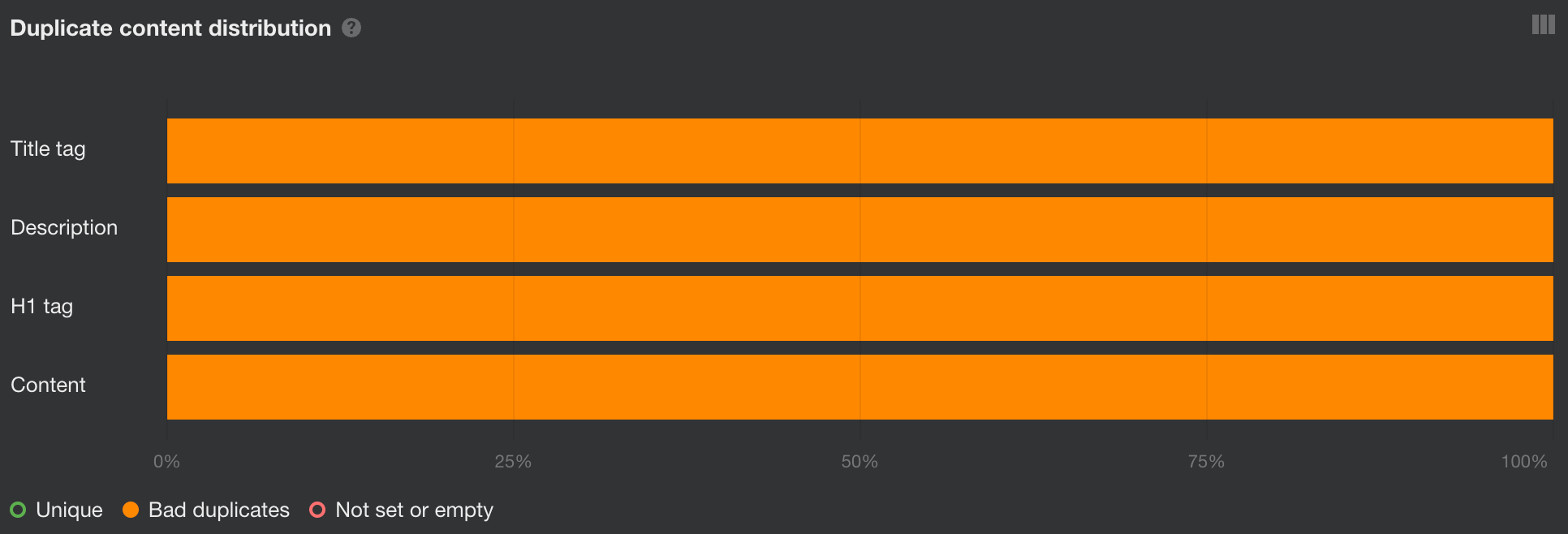

You can also go straight into Site Audit’s Duplicates report. If you see 100% bad duplicates, that’s likely the reason.

In this case, choose one version that will serve as canonical (likely the one with HTTPS) and permanently redirect other versions to it.

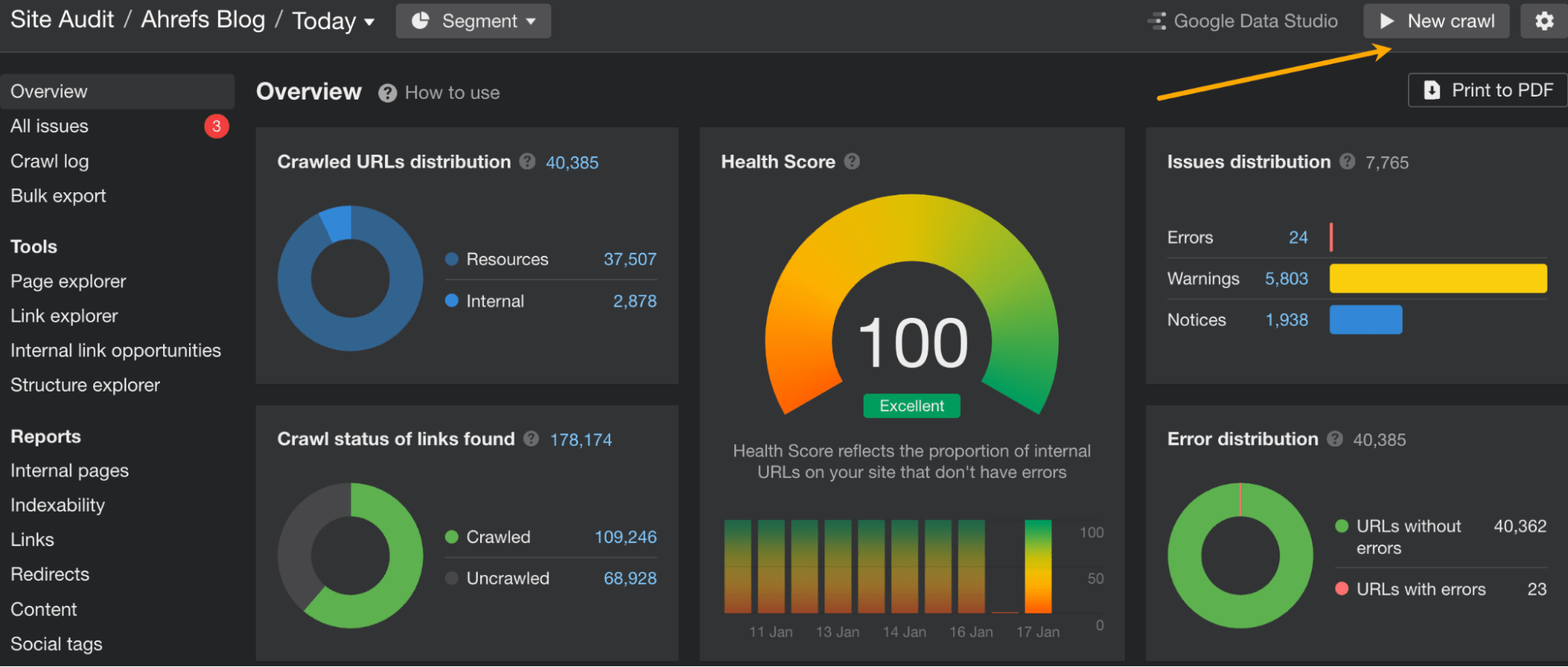

Then run a New crawl in Site Audit to see if there are any other bad duplicates left.

There are a few ways you can handle bad duplicates depending on the case. Learn how to resolve them in our guide.

Learn more: Duplicate Content: Why It Happens and How to Fix It

Pages that can’t be found (4XX errors) and pages returning server errors (5XX errors) won’t be indexed by Google, so they won’t bring you any traffic.

Furthermore, if broken pages have backlinks pointing to them, all of that link equity goes to waste.

Broken pages are also a waste of crawl budget, which is something to watch out for on bigger websites.

Solution

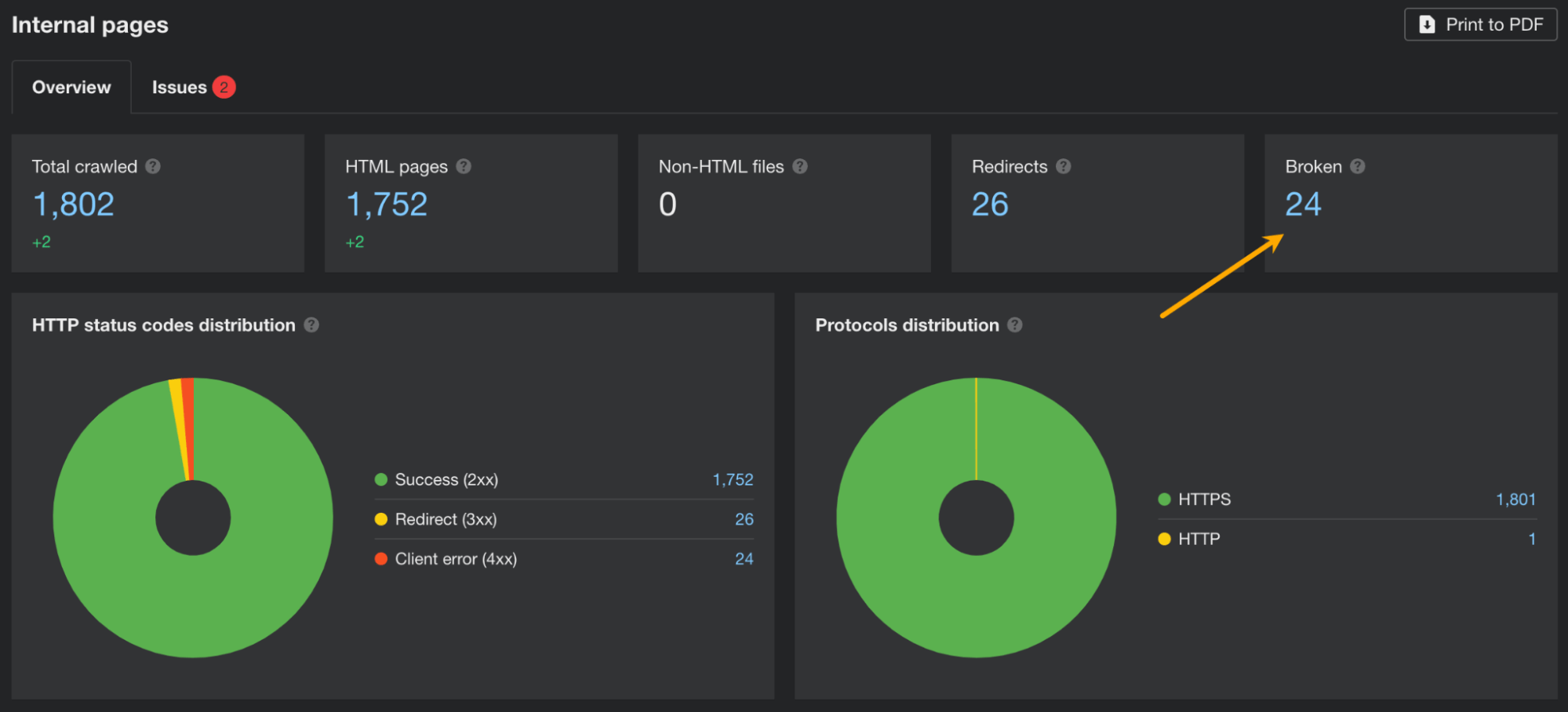

In AWT, you should:

- Open Site Audit.

- Go to the Internal pages report.

- See if there are any broken pages. If so, the Broken section will show a number higher than 0. Click on the number to show affected pages.

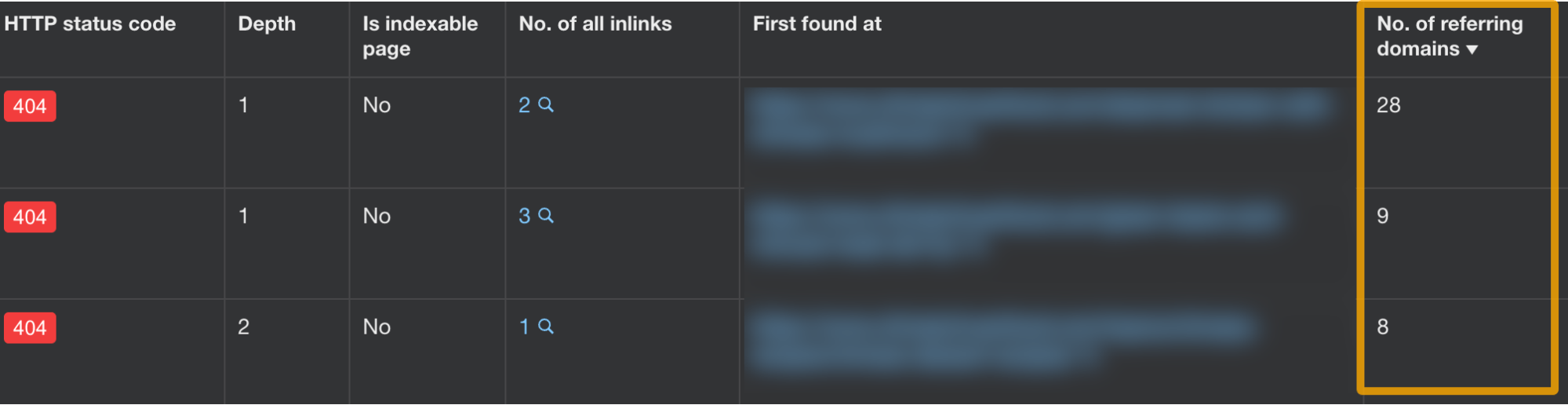

In the report showing pages with issues, it’s a good idea to add a column for the number of referring domains. This will help you decide how to fix the issue.

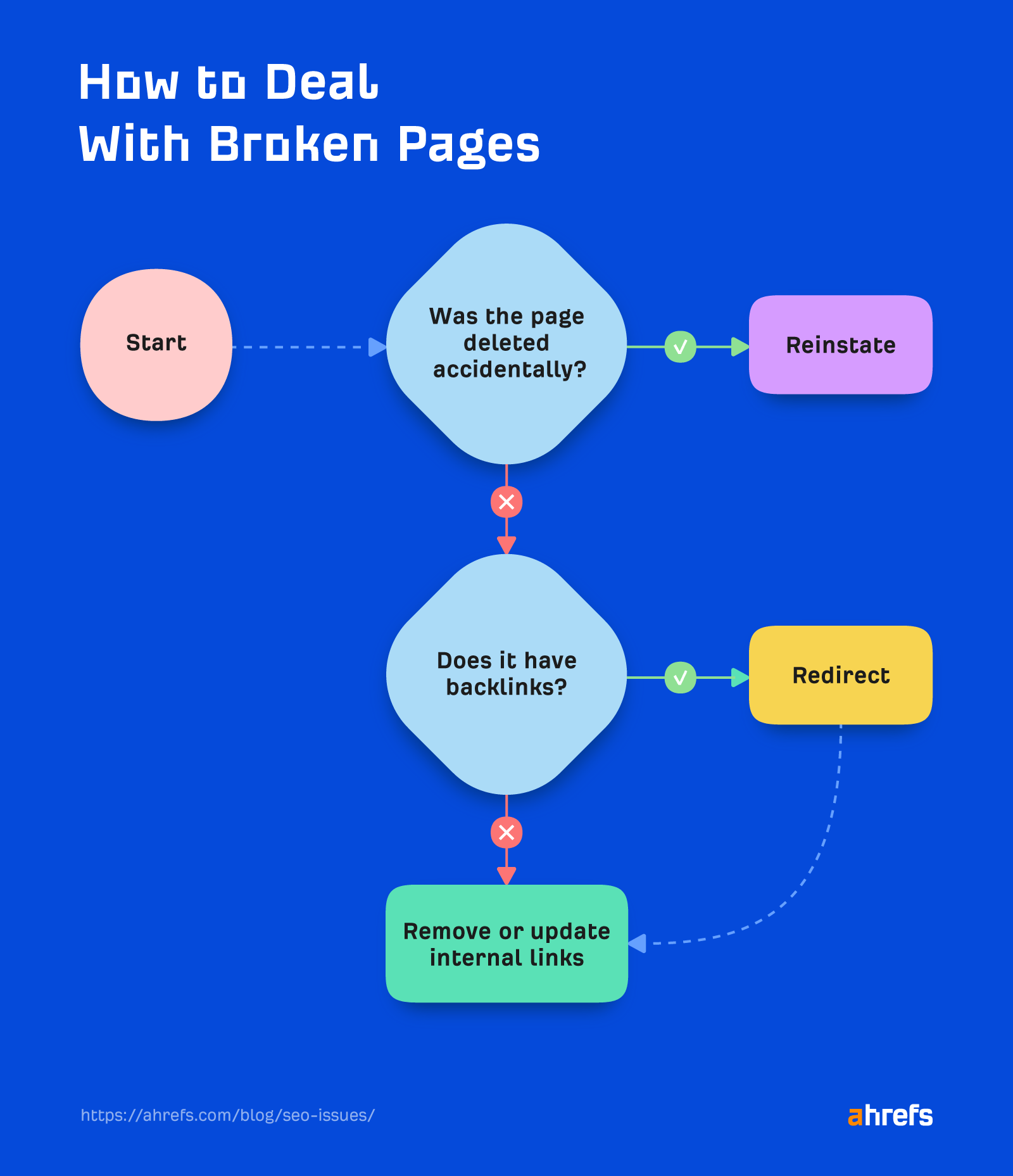

Now, fixing broken pages (4XX error codes) is quite simple, but there is more than one possibility. Here’s a short graph explaining the process:

Dealing with server errors (the ones reporting a 5XX) can be tougher, as there are different possible reasons for a server to be unresponsive. Read this short guide for troubleshooting.

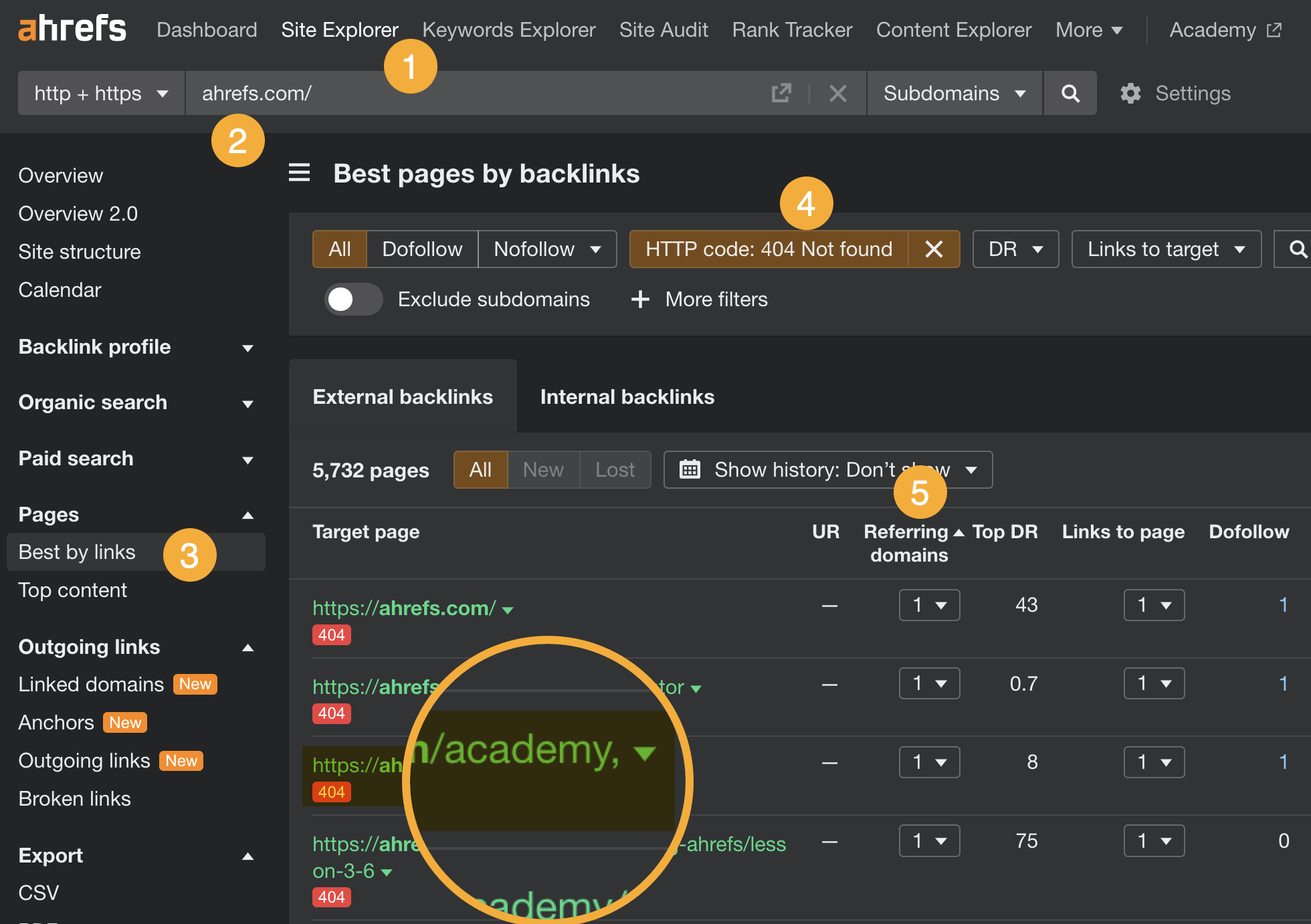

Recommendation

- Go to Site Explorer

- Enter your domain

- Go to the Best by links report

- Add a “404 not found” filter

- Then sort the report by referring domains from high to low
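The filter-and-sort step above is simple to reproduce on an exported report. This sketch assumes each row is a dictionary with `url`, `status`, and `referring_domains` keys; those key names are hypothetical and will depend on how you export your data.

```python
def broken_pages_by_referring_domains(pages):
    """Keep only 404 pages and sort them by referring domains, high to low.

    pages: iterable of dicts with assumed keys 'url', 'status', 'referring_domains'.
    """
    broken = [page for page in pages if page["status"] == 404]
    return sorted(broken, key=lambda page: page["referring_domains"], reverse=True)
```

The pages at the top of the result are the ones where the most link equity is currently going to waste.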

If you’ve already dealt with broken pages, chances are you’ve fixed most of the broken links issues.

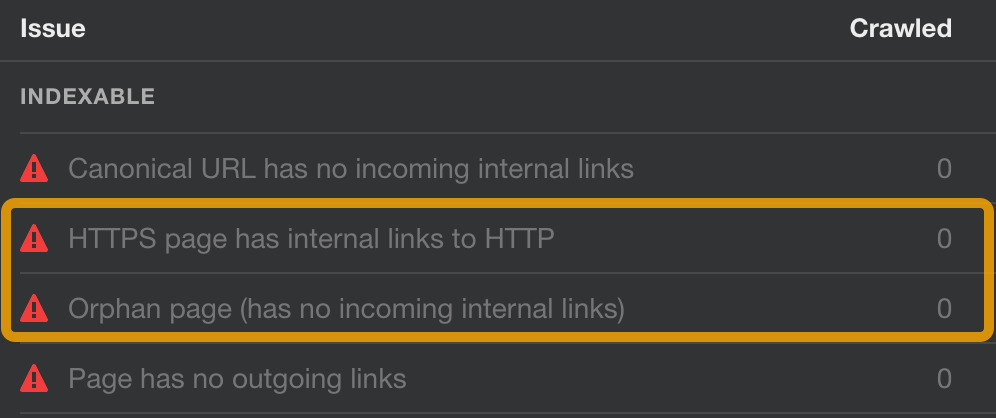

Other critical issues related to links are:

- Orphan pages – These are the pages without any internal links. Web crawlers have limited ability to access these pages (only from sitemap or backlinks), and there is no link equity flowing to them from other pages on your website. Last but not least, users won’t be able to access this page from the site navigation.

- HTTPS pages linking to internal HTTP pages – If an internal link on your website brings users to an HTTP URL, web browsers will likely show a warning about a non-secure page. This can damage your overall website authority and user experience.

Solution

In AWT, you can:

- Go to Site Audit.

- Open the Links report.

- Open the Issues tab.

- Look for the following issues in the Indexable category. Click to see affected pages.

Fix the first issue by changing the links from HTTP to HTTPS or simply delete those links if no longer needed.

For the second issue, an orphan page needs to be either linked to from some other page on your website or deleted if a given page holds no value to you.
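Both link issues can be expressed as small checks over a list of internal links. The sketch below assumes you have the full list of known pages (from a sitemap, say) and every internal link as a `(source, target)` pair; the function names and input format are made up for illustration.

```python
from urllib.parse import urlsplit


def orphan_pages(known_pages, links):
    """Return known pages that no other page links to.

    links: iterable of (source_url, target_url) internal links.
    """
    linked = {target for source, target in links if source != target}
    return sorted(set(known_pages) - linked)


def https_to_http_links(links):
    """Return internal links that go from an HTTPS page to an HTTP URL."""
    return [
        (source, target)
        for source, target in links
        if urlsplit(source).scheme == "https" and urlsplit(target).scheme == "http"
    ]
```

Note that a homepage nothing links back to would also show up as an orphan here, so read the output with that in mind.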

Sidenote.

Ahrefs’ Site Audit can find orphan pages as long as they have backlinks or are included in the sitemap. For a more thorough search for this issue, you will need to analyze server logs to find orphan pages with hits. Find out how in this guide.

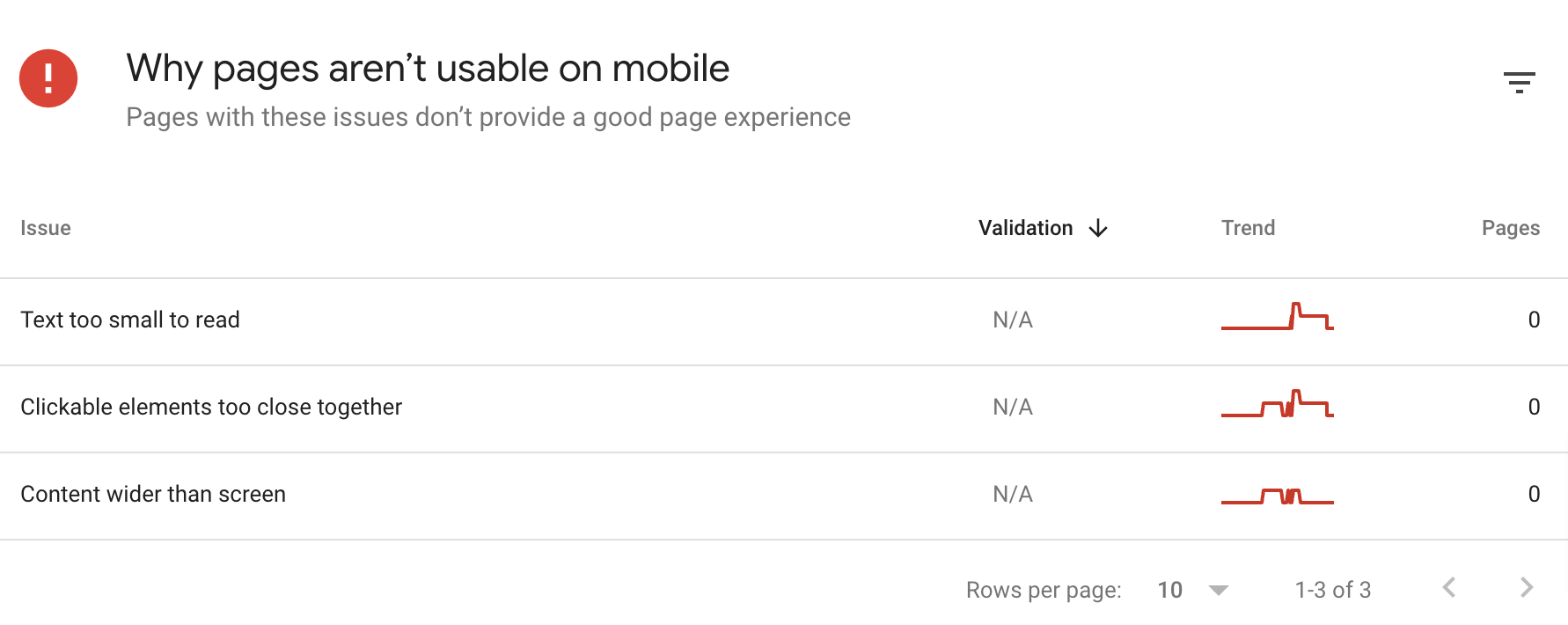

Having a mobile-friendly website is a must for SEO. Two reasons:

- Google uses mobile-first indexing – It’s mostly using the content of mobile pages for indexing and ranking.

- Mobile experience is part of the Page Experience signals – While Google will allegedly always “promote” the page with the best content, page experience can be a tiebreaker for pages offering content of similar quality.

Solution

In GSC:

- Go to the Mobile Usability report in the Experience section

- View affected pages by clicking on issues in the Why pages aren’t usable on mobile section

You can read Google’s guide for fixing mobile issues here.

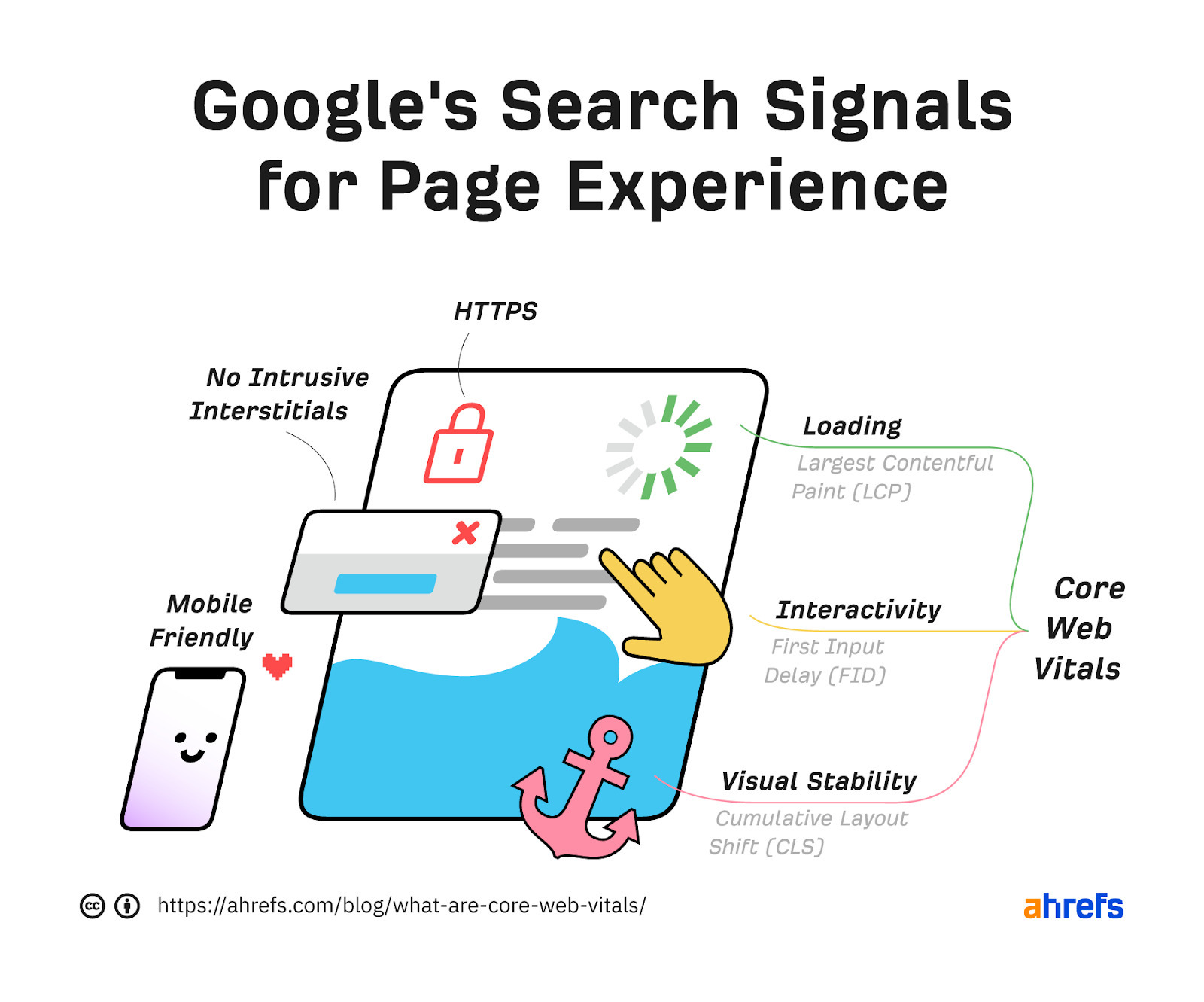

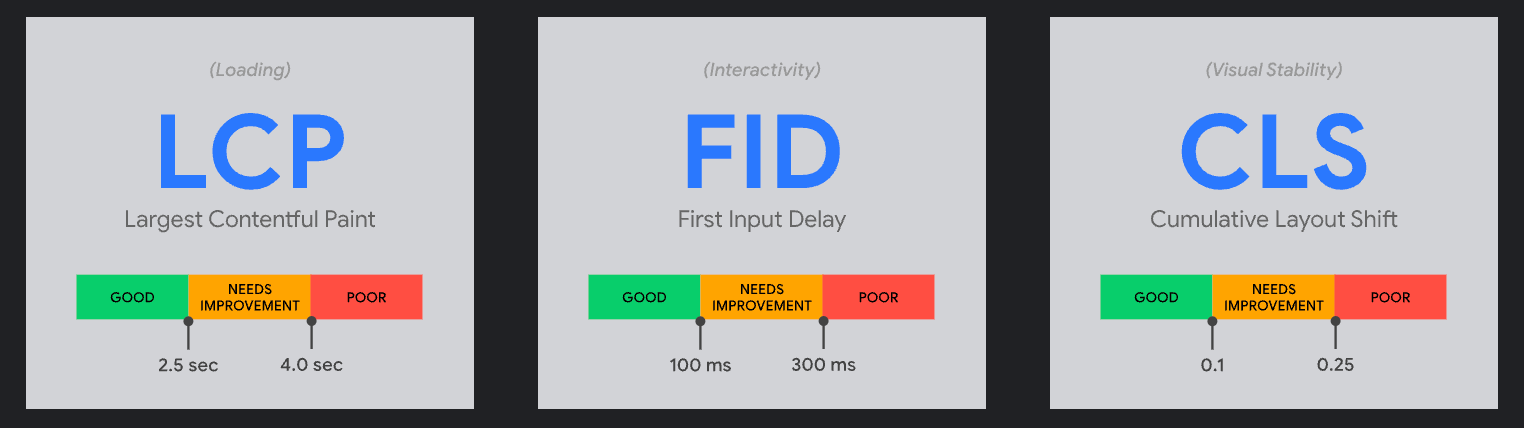

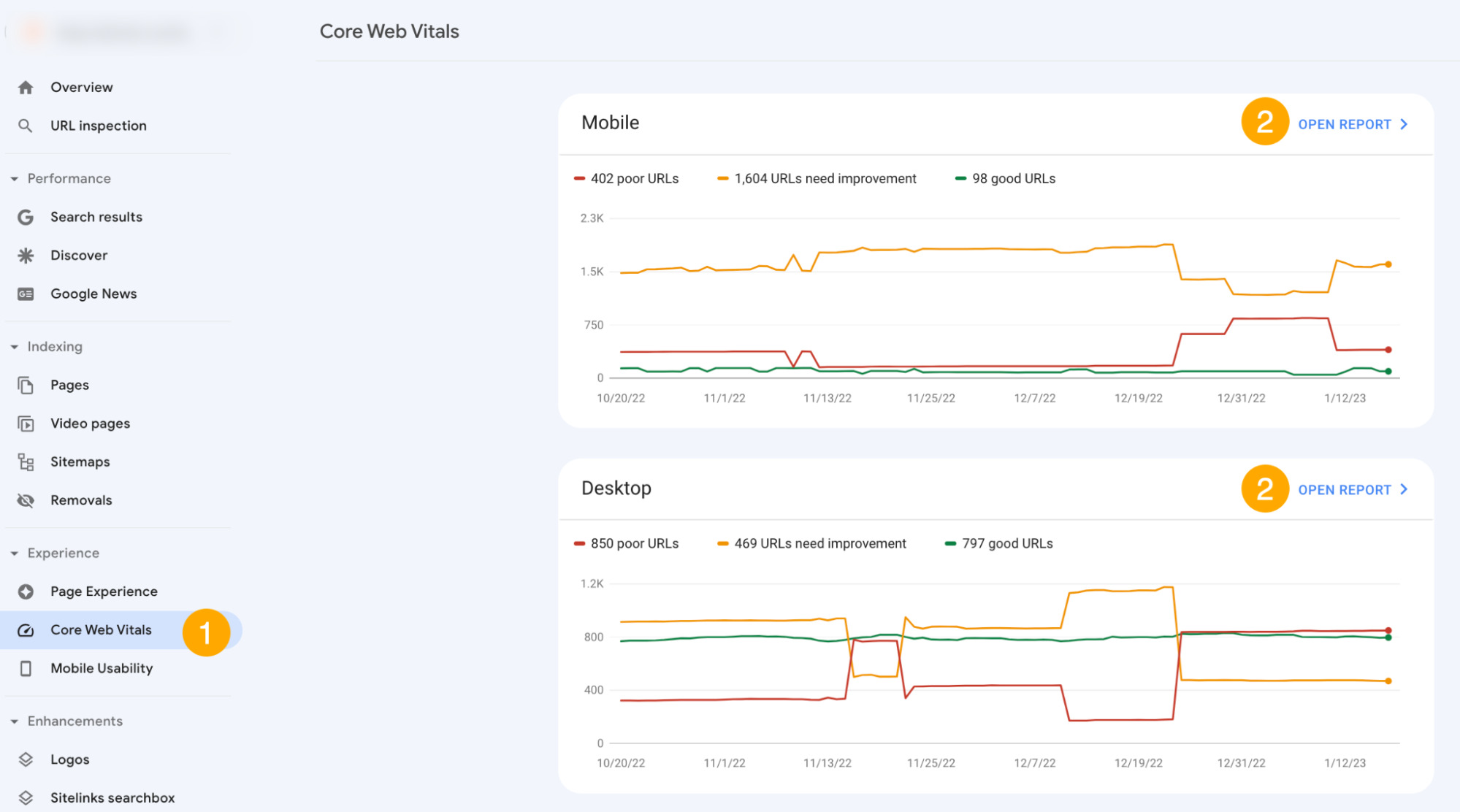

Performance and visual stability are other aspects of Page Experience signals used by Google to rank pages.

Google has developed a special set of metrics to measure user experience called Core Web Vitals (CWV). Site owners and SEOs can use these metrics to see how Google perceives their website in terms of UX.

While page experience can be a ranking tiebreaker, CWV is not a race. You don’t need to have the fastest website on the internet. You just need to score “good” ideally in all three categories: loading, interactivity, and visual stability.
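To show what scoring “good” looks like as a check, here is a small sketch. The thresholds are not from this article: they are the “good” limits Google has published for Largest Contentful Paint, Interaction to Next Paint, and Cumulative Layout Shift, and the interactivity metric in particular has changed over time (INP replaced First Input Delay), so verify them against Google's current documentation. The dictionary keys are hypothetical names.

```python
# Assumed "good" limits: one metric per category (loading, interactivity, visual stability).
GOOD_THRESHOLDS = {
    "lcp_seconds": 2.5,  # loading
    "inp_ms": 200,       # interactivity
    "cls": 0.1,          # visual stability
}


def failing_metrics(measurements):
    """Return the metrics whose measured value is above the 'good' limit."""
    return [
        name
        for name, limit in GOOD_THRESHOLDS.items()
        if measurements[name] > limit
    ]
```

A page passes when the returned list is empty; being far below a limit earns nothing extra, which is the “not a race” point made above.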

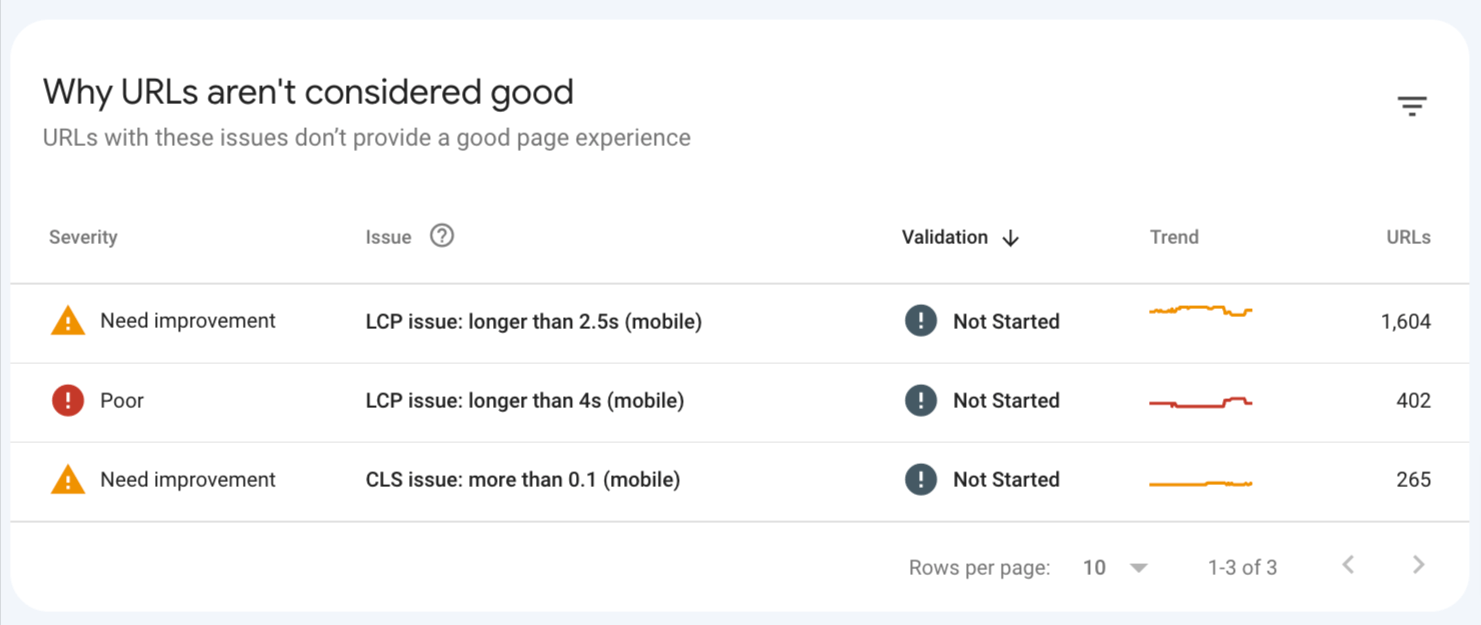

Solution

In GSC:

- First, click on Core Web Vitals in the Experience section of the reports.

- Then click Open report in each section to see how your website scores.

- For pages that aren’t considered good, you’ll see a special section at the bottom of the report. Use it to see pages that need your attention.

Optimizing for CWV may take some time. This may include things like moving to a faster (or closer) server, compressing images, optimizing CSS, etc. We explain how to do this in the third part of this guide to CWV.

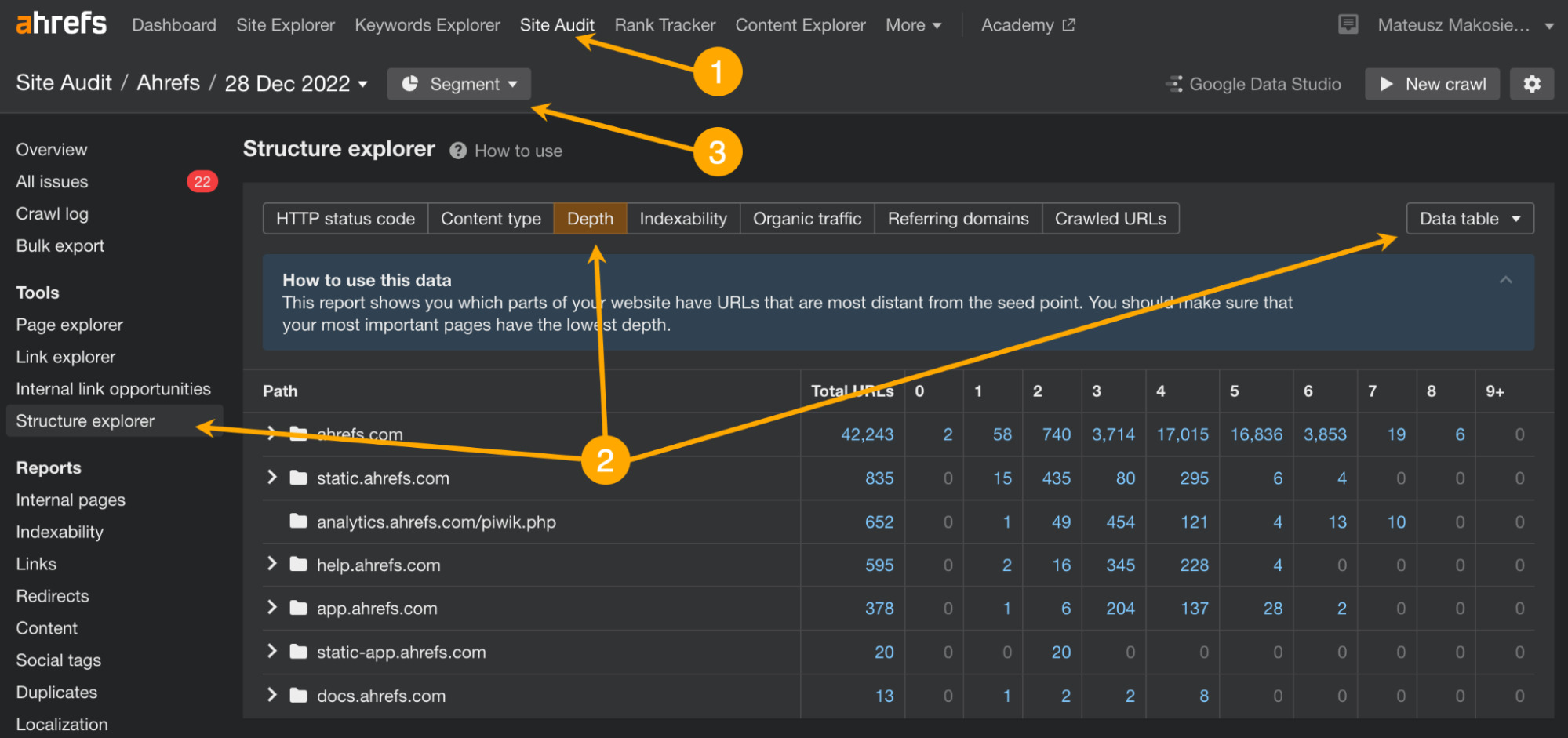

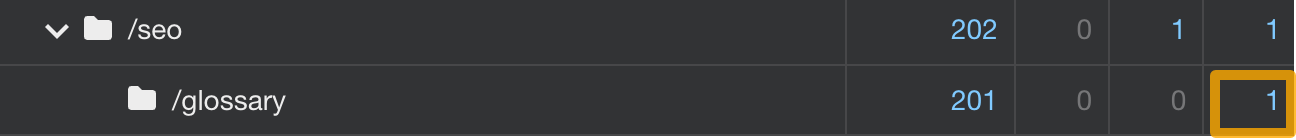

Bad website structure in the context of technical SEO is mainly about having important organic pages too deep in the website structure.

Pages that are nested too deep (i.e., users need >6 clicks from the homepage to get to them) will receive less link equity from your homepage (likely the page with the most backlinks), which may affect their rankings. This is because link value diminishes with every link “hop.”

Sidenote.

Website structure is important for other reasons too, such as the overall user experience, crawl efficiency, and helping Google understand the context of your pages. Here, we’ll only focus on the technical aspect, but you can read more about the topic in our full guide: Website Structure: How to Build Your SEO Foundation.

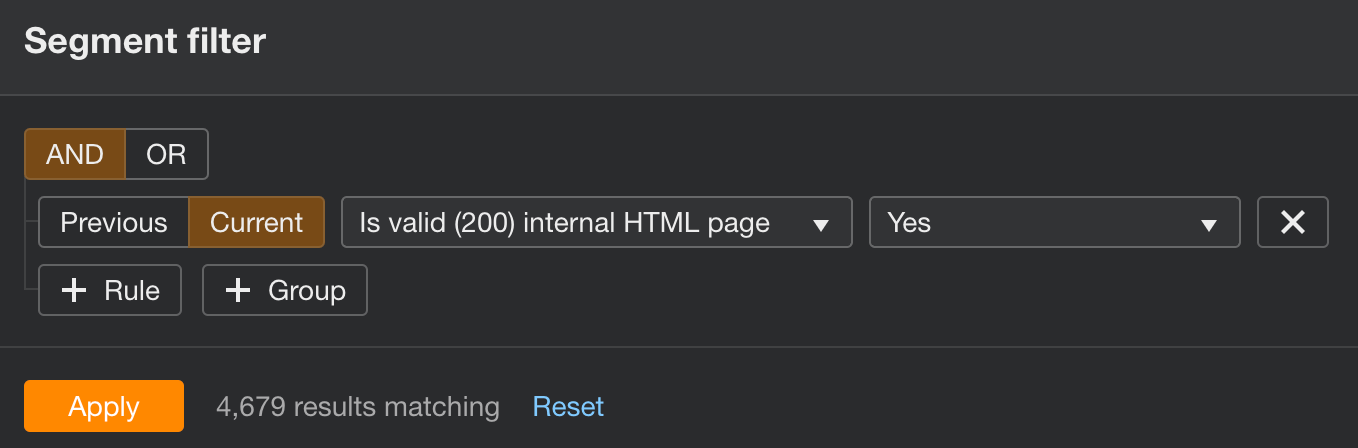

Solution

In AWT:

- Open Site Audit

- Go to Structure explorer, switch to the Depth tab, and set the data type to Data table

- Configure the Segment to only valid HTML pages and click Apply

- Use the graph to investigate pages that are more than six clicks away from the homepage
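Click depth is just the shortest path from the homepage in your internal link graph, so it can be sketched with a breadth-first search. The input format here is an assumption: `links` is a dictionary you would build from a crawl, mapping each URL to the URLs it links to.

```python
from collections import deque


def click_depths(homepage, links):
    """Return the minimum number of clicks from the homepage to each reachable page.

    links: dict mapping a URL to the list of URLs it links to (assumed format).
    """
    depths = {homepage: 0}
    queue = deque([homepage])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # first visit is the shortest path in BFS
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def too_deep(homepage, links, max_clicks=6):
    """List pages that need more than max_clicks clicks from the homepage."""
    depths = click_depths(homepage, links)
    return sorted(url for url, depth in depths.items() if depth > max_clicks)
```

Adding a single link from a shallow page to a deep one lowers the depth of that page and of everything reachable only through it.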

The way to fix the issue is to link to these deeper nested pages from pages closer to the homepage. More important pages could find their place in site navigation, while less important ones can simply be linked from pages a few clicks closer.

It’s a good idea to weigh in user experience and the business role of your website when deciding what goes into sitewide navigation.

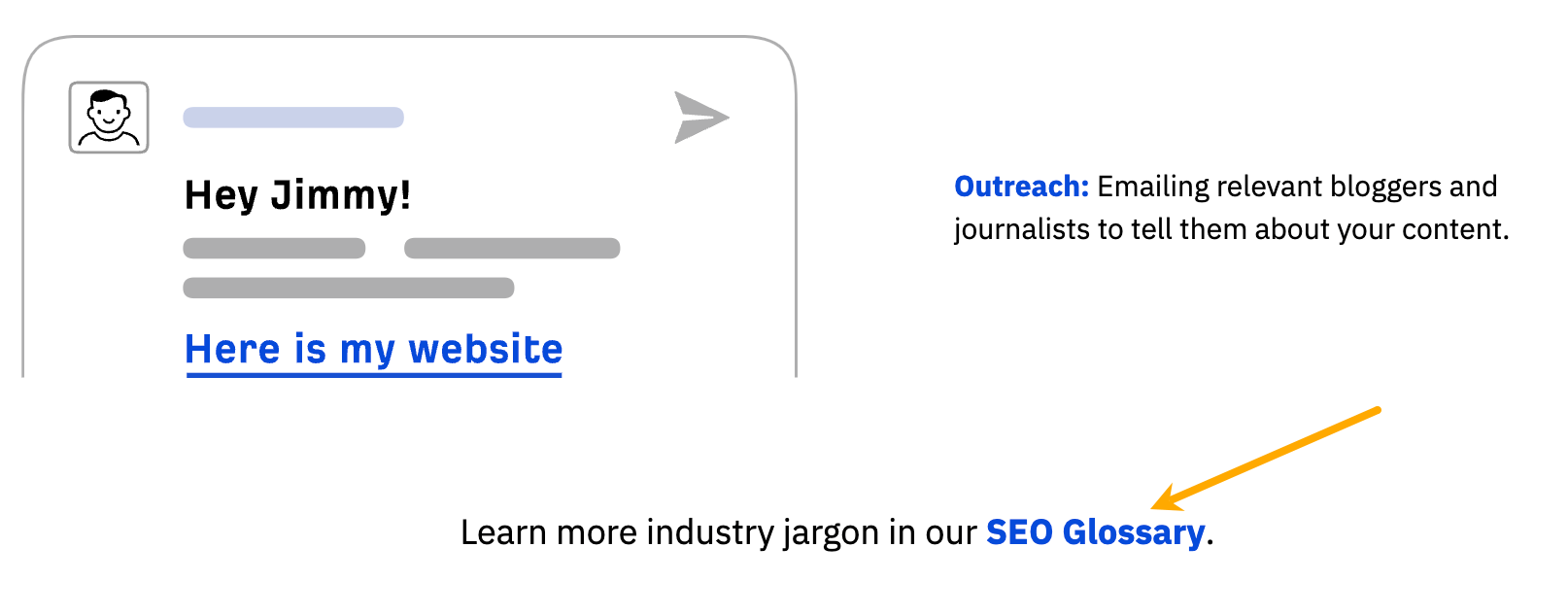

For example, we could probably give our SEO glossary a slightly higher chance to get ahead of organic competitors by including it in the main site navigation. Yet we decided not to because it isn’t such an important page for users who are not particularly searching for this type of information.

We’ve moved the glossary only up a notch by including a link inside the beginner’s guide to SEO (which itself is just one click away from the homepage).

Final thoughts

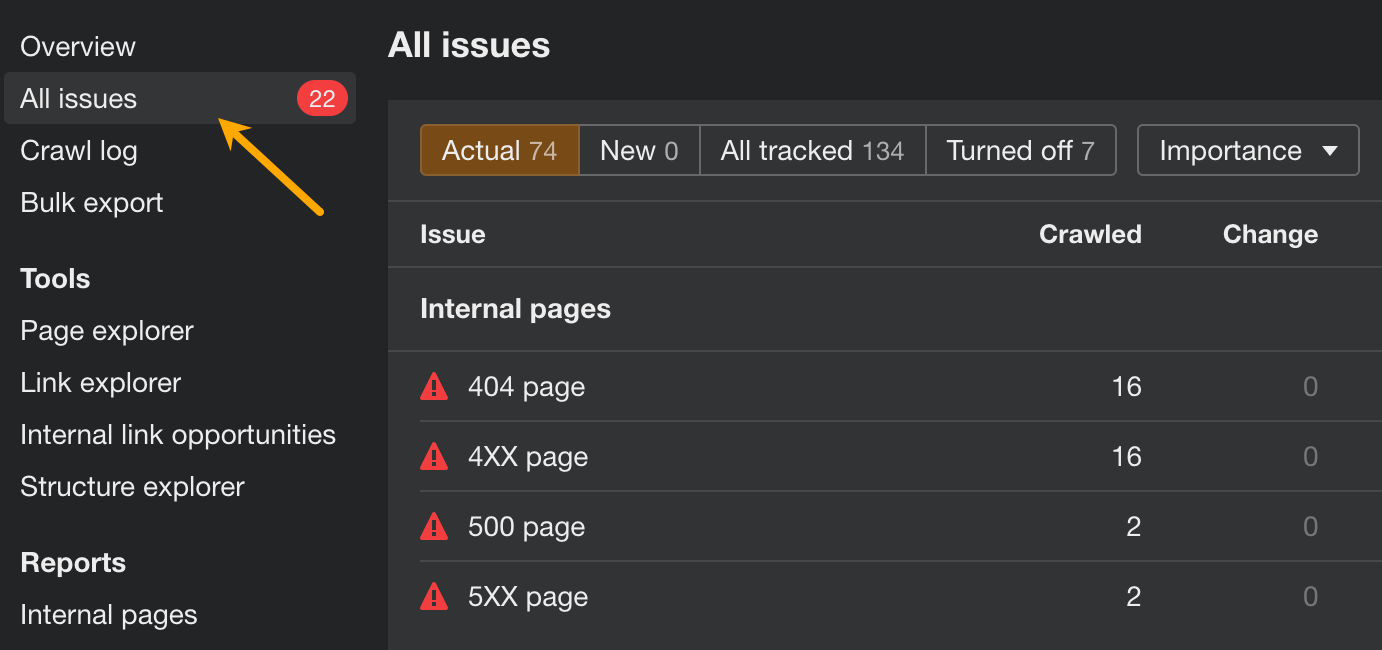

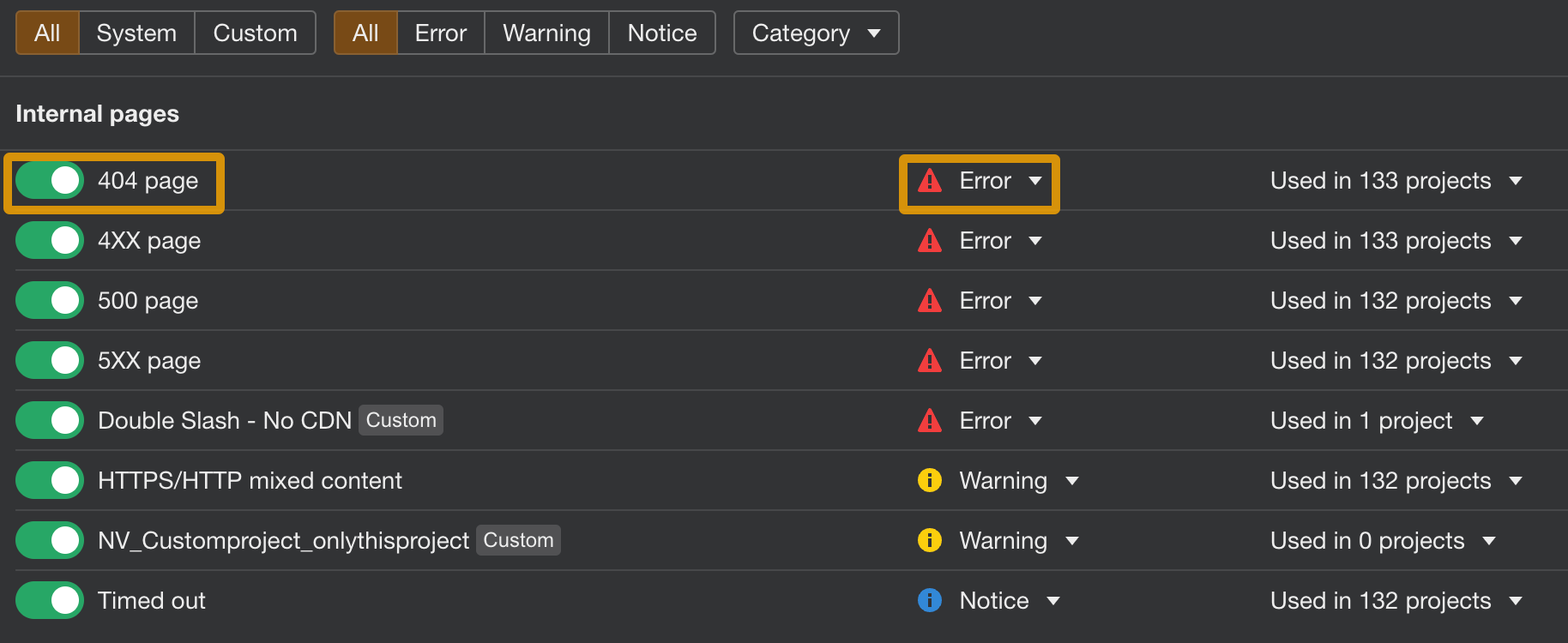

When you’re done fixing the more pressing issues, dig a little deeper to keep your site in perfect SEO health. Open Site Audit and go to the All issues report to see other issues regarding on-page SEO, image optimization, redirects, localization, and more. In each case, you will find instructions on how to deal with the issue.

You can also customize this report by turning issues on/off or changing their priority.

Did I miss any important technical issues? Let me know on Twitter or Mastodon.
